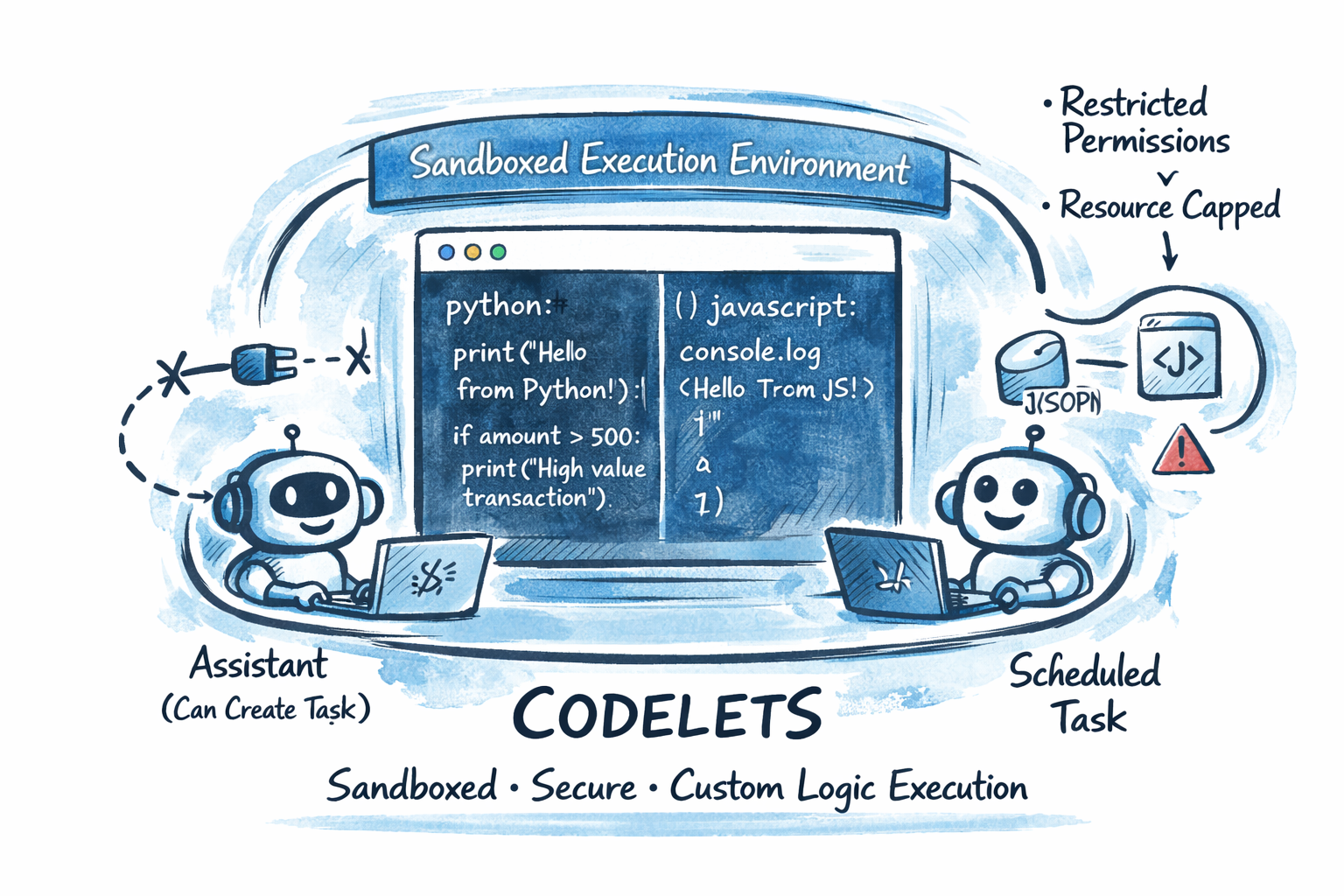

What codelets are

Codelets are user-defined, versioned units of code. They are stored in a registry and run through dedicated execution endpoints.

That gives teams a dedicated extension layer for:

- deterministic calculations and routing logic,

- data cleanup and transformation before downstream steps,

- task and webhook automation actions,

- structured programmatic calls into platform operations and approved integrations.

The registry holds each codelet's definition, and the execution endpoints run it. Context and SDK support can be enabled for trusted cases where platform operations are required.
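To make the split between definition and execution concrete, here is a minimal sketch in Python. It is not the platform's API: `Codelet`, `Registry`, `execute`, and the `route_ticket` handler are all hypothetical names invented for illustration, and the in-memory dictionary only stands in for a real registry and execution endpoint.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass(frozen=True)
class Codelet:
    """Hypothetical codelet definition: a name, a version, and a handler."""
    name: str
    version: int
    handler: Callable[[Dict[str, Any]], Dict[str, Any]]


class Registry:
    """Hypothetical registry: stores definitions keyed by (name, version)."""

    def __init__(self) -> None:
        self._items: Dict[Tuple[str, int], Codelet] = {}

    def register(self, codelet: Codelet) -> None:
        key = (codelet.name, codelet.version)
        if key in self._items:
            # A published version is never overwritten; changes get a new version.
            raise ValueError(f"{codelet.name} v{codelet.version} already registered")
        self._items[key] = codelet

    def get(self, name: str, version: int) -> Codelet:
        return self._items[(name, version)]


def execute(registry: Registry, name: str, version: int,
            payload: Dict[str, Any]) -> Dict[str, Any]:
    """Stand-in for an execution endpoint: look up one version and run it."""
    return registry.get(name, version).handler(payload)


def route_ticket(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Deterministic routing: the same input always yields the same queue."""
    amount = payload.get("amount", 0)
    queue = "priority" if amount >= 1000 else "standard"
    return {"queue": queue}


registry = Registry()
registry.register(Codelet("route_ticket", 1, route_ticket))

result = execute(registry, "route_ticket", 1, {"amount": 1500})
print(result)  # {'queue': 'priority'}
```

The registry only stores and returns definitions, and `execute` is the only place a handler is called, which mirrors the division of responsibilities described above.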

Why this matters

- Separation of concerns: use LLMs for judgment, and codelets for control flow and calculation.

- Predictable behavior: execution constraints are explicit (timeouts, memory, API budgets, network rules).

- Repeatability: versioning gives reliable behavior over time.

- Safer extension: policy controls determine what each codelet can do instead of open-ended scripting.

- Built for the operational path: codelets are invoked from workflows, schedules, and webhook flows.
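One way to picture explicit execution constraints and policy controls is as a small declared policy that is checked before each action. The sketch below is an assumption-laden illustration, not the platform's implementation: `ExecutionPolicy`, `PolicyGuard`, `PolicyViolation`, the field names, and the host `api.example.com` are all hypothetical. It enforces only the API budget and network rule; the timeout and memory limit are declared but would need a real sandbox to enforce.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class ExecutionPolicy:
    """Hypothetical per-codelet limits, declared up front."""
    timeout_seconds: float          # declared only; not enforced in this sketch
    memory_limit_mb: int            # declared only; not enforced in this sketch
    api_call_budget: int            # maximum outbound API calls per run
    allowed_hosts: FrozenSet[str]   # network rule: hosts the codelet may reach


class PolicyViolation(Exception):
    """Raised when a codelet attempts something its policy does not allow."""


class PolicyGuard:
    """Tracks usage for one run and rejects calls outside the policy."""

    def __init__(self, policy: ExecutionPolicy) -> None:
        self.policy = policy
        self.api_calls_used = 0

    def check_api_call(self, host: str) -> None:
        if host not in self.policy.allowed_hosts:
            raise PolicyViolation(f"host not allowed: {host}")
        if self.api_calls_used >= self.policy.api_call_budget:
            raise PolicyViolation("API call budget exhausted")
        self.api_calls_used += 1


policy = ExecutionPolicy(
    timeout_seconds=5.0,
    memory_limit_mb=128,
    api_call_budget=2,
    allowed_hosts=frozenset({"api.example.com"}),
)

guard = PolicyGuard(policy)
guard.check_api_call("api.example.com")
guard.check_api_call("api.example.com")

try:
    guard.check_api_call("api.example.com")  # third call exceeds the budget of 2
    budget_exceeded = False
except PolicyViolation:
    budget_exceeded = True

print(budget_exceeded)  # True
```

Because the limits live in data rather than inside the codelet's own logic, they can be reviewed and changed without touching the codelet itself.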